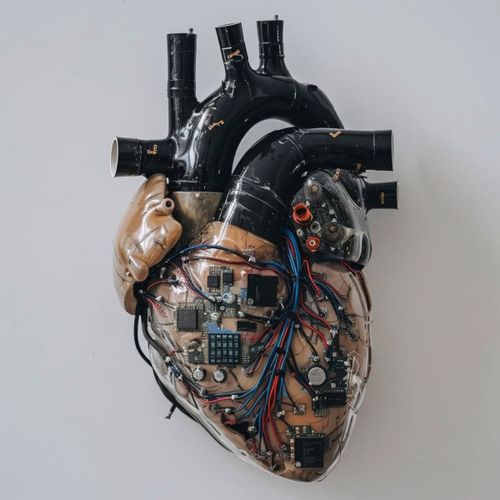

Healthcare in 2026 is no longer just experimenting with artificial intelligence. It is quietly reorganizing itself around it. Not in the dramatic “AI replaces doctors” sense that dominated early headlines, but in a more subtle and consequential way: AI is becoming embedded in the everyday machinery of diagnosis, documentation, triage, and decision support. Below are the core narratives: where it actually works, and where it creates new problems.

AI is becoming the invisible diagnostic layer

One of the clearest transformations is happening in diagnostics, especially in imaging and pattern recognition. AI systems are increasingly used as a “second reader” in radiology, dermatology, and pathology — scanning images before or alongside human specialists and flagging anomalies that may require attention.

This is not theoretical: systematic reviews already show that AI tools can improve detection performance in specific clinical settings, particularly in imaging-heavy fields where pattern recognition is central. A broad overview of clinical AI applications in healthcare highlights measurable improvements in detection and workflow efficiency, especially when AI is used as an assistive layer rather than a standalone decision-maker.

At the same time, benchmark studies of large medical AI models suggest that in structured diagnostic environments, these systems can reach or even exceed human-level performance. One recent study evaluating multimodal AI reasoning in medical diagnosis reported high accuracy in controlled test conditions.

But the key tension is already visible: performance in curated datasets does not always translate to messy clinical reality. Hospitals are not exams, and patients rarely present like textbook cases.

The myth of the “AI doctor” is being replaced by something more practical

The early narrative of fully autonomous AI doctors has largely faded in serious medical research. Instead, what is scaling rapidly is something less cinematic but far more impactful: AI as clinical infrastructure.

In practice, this means systems that generate clinical notes, summarize patient histories, assist with differential diagnoses, and reduce administrative load on clinicians. These tools are not replacing medical judgment; they are compressing the time and cognitive effort required to reach it.

Large-scale industry research projects have demonstrated that AI systems can perform strongly in structured diagnostic reasoning tasks, sometimes matching physician performance under controlled conditions. One widely discussed example comes from Microsoft’s medical AI evaluation work, where models performed competitively in diagnostic benchmarks.

However, the same research direction consistently emphasizes a constraint that defines the entire field: medicine is not a structured problem. It is probabilistic, incomplete, and context-dependent. That gap between test performance and clinical deployment remains one of the biggest unresolved issues in medical AI.

The real bottleneck is data fragmentation

If there is a single structural reason why healthcare is difficult to “fix” with AI, it is not model quality. It is infrastructure.

Medical data is still fragmented across systems, institutions, and formats that were never designed to communicate with each other. Electronic health records are often incomplete, inconsistent, and incompatible across providers. This creates a fundamental limitation: even the most advanced AI cannot reason effectively over missing or disconnected information.

Global health organizations have repeatedly identified interoperability and data fragmentation as key barriers to safe AI adoption in healthcare systems. The World Health Organization explicitly highlights the importance of standardized, connected health data ecosystems for effective AI integration. In this sense, AI is not just entering medicine as a tool. It is exposing the limits of medicine’s digital foundation.

A new risk is emerging: over-trust in machine output

As AI becomes more embedded in clinical workflows, a less visible but potentially more dangerous problem is emerging: automation bias. This refers to the tendency of humans to over-trust algorithmic recommendations, even when they are incorrect.

Recent research shows that when AI systems provide explanations alongside predictions, clinicians may perceive incorrect outputs as more reliable than they actually are. This creates a subtle but important shift in error dynamics: mistakes are no longer purely human or purely machine-driven, but often the result of misplaced trust between the two. A recent study on AI explanation effects and clinical decision-making highlights this risk.

The implication is not that clinicians become less skilled, but that the boundary between judgment and suggestion becomes harder to maintain.

Medicine is shifting from decisions to shared inference

One of the deeper structural changes is conceptual rather than technical. Medicine is gradually moving away from single-point decision-making toward shared inference systems, where clinicians and AI models jointly generate a ranked space of possible diagnoses or actions.

This is already visible in oncology, radiology, and emergency triage systems, where AI tools increasingly function as probabilistic advisors rather than deterministic decision engines. Reviews of AI integration in clinical workflows describe this shift as a move toward augmented decision-making systems, where human and machine reasoning are tightly coupled rather than separate.

The result is a quieter but profound transformation in medical authority. The question is no longer just what the correct diagnosis is, but how it is produced.

AI reduces some errors, but introduces new systemic ones

The original promise of AI in healthcare was straightforward: fewer mistakes. The reality is more complex. AI systems are indeed helping reduce certain categories of error, particularly in image-based diagnosis, administrative tasks, and early warning systems for patient deterioration.

However, they are also introducing new types of risk. These include over-reliance on model outputs, hidden bias amplification, and performance degradation when clinicians become dependent on AI assistance. Research on clinical AI usage patterns suggests that human performance can shift depending on whether AI support is present, raising questions about long-term skill retention and system resilience.

This creates an important paradox. AI does not simply reduce error rates; it redistributes them across the system in new forms.

Regulation is lagging behind deployment speed

As AI becomes embedded in clinical workflows, regulators are trying to define what “safe use” actually means. The focus is shifting toward lifecycle governance, continuous monitoring, and post-deployment validation rather than one-time approval.

However, regulatory systems are still adapting to the speed of innovation. Generative AI tools, in particular, are being integrated into clinical environments faster than formal governance frameworks can fully assess their risks and limitations.
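To make "continuous monitoring" a little more concrete, here is a minimal sketch of what a post-deployment check could look like: a rolling window that tracks how often a model's output agrees with the diagnosis later confirmed by clinicians, and flags the system for review when agreement drops. The class name, window size, and threshold are all hypothetical choices made for illustration; this is not drawn from any regulatory framework or vendor tool.

```python
from collections import deque


class RollingAgreementMonitor:
    """Hypothetical monitor: tracks recent agreement between a model's
    output and the clinician-confirmed label over a fixed-size window."""

    def __init__(self, window=100, threshold=0.9):
        # deque with maxlen silently drops the oldest entry once full.
        self.outcomes = deque(maxlen=window)
        self.threshold = threshold  # illustrative value, not a standard

    def record(self, model_label, confirmed_label):
        """Store whether the model matched the confirmed diagnosis."""
        self.outcomes.append(model_label == confirmed_label)

    def agreement(self):
        """Fraction of recent cases where the model agreed, or None if empty."""
        if not self.outcomes:
            return None
        return sum(self.outcomes) / len(self.outcomes)

    def needs_review(self):
        """True when recent agreement has fallen below the threshold."""
        rate = self.agreement()
        return rate is not None and rate < self.threshold
```

In use, each confirmed case would call `record(...)`, and `needs_review()` would be checked on a schedule. A real deployment would need far more than this (case-mix adjustment, subgroup breakdowns, delayed ground truth), but the basic shape is the same: validation becomes an ongoing process rather than a one-time gate.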

The deeper shift: medicine is becoming probabilistic again

Perhaps the most important long-term transformation is not technological but epistemological. For much of the 20th century, medicine tried to standardize itself into deterministic pathways: guidelines, protocols, and structured decision trees. AI is pushing it back toward probability-based reasoning.

Instead of single diagnoses, systems increasingly produce ranked differentials. Instead of certainty, they produce confidence distributions. Instead of fixed pathways, they generate dynamic risk assessments.
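As a rough illustration of what a "ranked differential" means in practice, the sketch below turns raw model scores into a probability distribution with a softmax and sorts the candidates by likelihood. The function name, the diagnoses, and the scores are invented for the example; real clinical systems use calibrated models and much richer inputs.

```python
import math


def ranked_differential(scores):
    """Convert raw per-diagnosis scores into (diagnosis, probability)
    pairs, sorted from most to least likely, using a softmax."""
    # Subtract the max score before exponentiating for numerical stability.
    top = max(scores.values())
    exps = {dx: math.exp(s - top) for dx, s in scores.items()}
    total = sum(exps.values())
    probs = {dx: e / total for dx, e in exps.items()}
    return sorted(probs.items(), key=lambda item: item[1], reverse=True)


# Hypothetical scores, for illustration only.
example_scores = {"pneumonia": 2.0, "bronchitis": 1.0, "pulmonary embolism": 0.0}
for diagnosis, probability in ranked_differential(example_scores):
    print(f"{diagnosis}: {probability:.2f}")
```

The point is the shape of the output: not one answer, but an ordered list with a weight attached to each possibility, which the clinician then interprets.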

In a sense, this is not a regression but a return to how biological systems actually behave: uncertain, variable, and context-dependent.